Technology | March 14, 2026

Solution Architect’s Guide to Driving Manufacturing Insights from AI

AI does not create manufacturing insight. It consumes structured information and returns analysis. If the underlying data is inconsistent, undocumented or implicitly defined, the results will be unreliable regardless of how advanced the analytics appear to be.

AI in manufacturing fails for one simple reason: inconsistent data structure. Predictive maintenance, quality analytics and digital twins depend on standardized, versioned and semantically consistent data models. A Master Data Model defines the meaning, units, hierarchy and governance of manufacturing data so AI systems can consume it without guesswork. Without this foundation, analytics become brittle, integrations multiply and plant-to-plant comparisons break down. This guide explains what a manufacturing data model is, how to architect it, how to govern it and how to expose it securely so AI systems can operate with accuracy and scale.

Summary

In the earliest manufacturing systems, data context was implicit. A designer/programmer built systems with implicit understanding of the formats, data types and other attributes of data passed from a manufacturing system to an application. While standards exist, we rely too much on implicit and a priori context. Today, for an AI model or any other application to properly consume and utilize data, it must have clear, consistent and standardized data models. This paper describes what those data models are, how to construct and locate them and how to provide access to them.

Key Points

- Standardized data models are critical to the success of digitalization and Smart Manufacturing.

- Data models must be organized around the specific problems faced by the manufacturer.

- No matter where data models are stored, applications must be provided with standard IT-friendly access mechanisms.

- Standardized and consistent metadata is a benefit to the successful deployment of data models.

- Integrating data models with the data product (a filled-in instance of the data model) lets AI systems deliver the most value.

Definitions

Smart Manufacturing – A data-driven approach to optimizing production processes and creating manufacturing processes that enhance efficiency, quality and flexibility.

Data Model – A collection of related attributes of a manufacturing system described by some technique such as a User Defined Type (UDT), a database schema, a set of variables structured in code or even a paper diagram.

Data Schema – The formal structure of a Data Model, defining how the data is organized, stored and related.

Data Model Instantiation – Assigning data model elements to specific memory locations in a production control system, such as a tag in a PLC, a register in a Modbus TCP device, an attribute value in an OPC UA server or the present-value property in a BACnet device.

Data Product – An instantiated data model with current data values for some or all of the data elements in the data model.

Metadata – Descriptive data associated with a data item in a data model.

What is a Manufacturing Data Model?

A data model in manufacturing is simply a set of related elements (variables, points, registers) in a production control system. This definition differs slightly from that of a data model in an enterprise system.

A data model in enterprise applications is typically a diagram of a software system and its data elements. It is most often used to visualize the various elements flowing in and out of a database.

A data model in a manufacturing system defines a set of elements in a production control system that are related to each other. A data model for pump maintenance, for example, may organize cycle counts, run times, energy usage, asset identification and location data for a set of pumps.

Manufacturing data models can be simple or very complex. A single temperature can be a data model if it meets the needs of a consumer of the data product (an instance of the data model containing actual data). A complex data model can have tens or hundreds of related elements organized hierarchically. Hierarchical data models are used to describe more sophisticated relationships among the elements.
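The distinction between a data model and its data product can be sketched in a few lines of Python. This is a minimal illustration rather than a reference implementation: the class names (`Element`, `DataModel`, `DataProduct`), the units and the asset ID `PUMP-001` are assumptions made for the example, with the element names borrowed from the pump maintenance case above.

```python
from dataclasses import dataclass, field
from typing import Optional


@dataclass(frozen=True)
class Element:
    """One element of a data model: a name plus the unit and type it carries."""
    name: str
    unit: str
    data_type: type


@dataclass
class DataModel:
    """A named, versioned set of related elements (no live values)."""
    name: str
    version: str
    elements: list[Element]

    def new_product(self, asset_id: str) -> "DataProduct":
        # A data product starts with every element present but unfilled.
        return DataProduct(self, asset_id, {e.name: None for e in self.elements})


@dataclass
class DataProduct:
    """An instance of a data model holding current values for one asset."""
    model: DataModel
    asset_id: str
    values: dict[str, Optional[object]] = field(default_factory=dict)

    def set(self, name: str, value: object) -> None:
        element = next((e for e in self.model.elements if e.name == name), None)
        if element is None:
            raise KeyError(f"{name!r} is not part of model {self.model.name}")
        if not isinstance(value, element.data_type):
            raise TypeError(f"{name!r} expects {element.data_type.__name__}")
        self.values[name] = value


# Hypothetical pump maintenance model; units are illustrative.
pump_maintenance = DataModel(
    name="PumpMaintenance",
    version="1.0.0",
    elements=[
        Element("cycle_count", "cycles", int),
        Element("run_time", "h", float),
        Element("energy_usage", "kWh", float),
    ],
)

product = pump_maintenance.new_product(asset_id="PUMP-001")
product.set("cycle_count", 1250)
```

Keeping the model and the product as separate objects means one model definition can back any number of pumps, and a product may legitimately hold values for only some of its elements.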

Why Are Standardized Data Models Critical to Smart Manufacturing Systems?

Smart Manufacturing systems that deploy company-wide, standardized data models are simpler to maintain, easier to extend and reduce the cost of integration. Standard data models allow AI systems to easily consume data, understand its context and provide sound analytic judgements. Applications that rely on inconsistent or implicit data definitions are brittle, prone to failure and difficult, if not impossible, to maintain.

Smart Manufacturing systems cannot be successful without standardized data models. Without standardized data models, applications cannot properly consume data. At the most basic level, a value of “50” can be interpreted as 50 degrees Celsius, 50 hertz or 50 psi. Without a data model, an application must use other, a priori, mechanisms for data interpretation. Implicit definitions like these are undocumented, difficult to extend to additional applications and cause chaos when manufacturing data values are misinterpreted.

How Should Designers Architect Manufacturing Data Models?

Data modeling begins with the unique needs of the organization – the problems that must be solved. Data models can be built to optimize uptime, reduce waste, enhance maintenance or address the organization’s specific problems and priorities.

Begin with the decisions the operations or management team needs to make, and work backward to all the data values that are integral to those decisions. Once the required values are known, identify the name, unit, data type and semantics of each element of the data model. This is where a raw tag such as Temp21 becomes “Oven 21 Current Temperature”, an integer value in degrees Fahrenheit.

Data models like this provide a template for a data collection device to translate the raw physical signal into the data needed by the model.
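As a rough sketch of that template idea, the snippet below attaches the model's name, unit and type to a raw reading from `Temp21`. The `ElementDefinition` class, the `to_model_value` helper and the unit label `degF` are hypothetical names chosen for illustration; a real collection device would also handle scaling and signal conditioning, which are omitted here.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ElementDefinition:
    source_tag: str   # raw tag name in the control system
    name: str         # human-readable name from the data model
    unit: str         # unit label (assumed spelling)
    data_type: type   # Python type the value is coerced to


OVEN_21_TEMPERATURE = ElementDefinition(
    source_tag="Temp21",
    name="Oven 21 Current Temperature",
    unit="degF",
    data_type=int,
)


def to_model_value(definition: ElementDefinition, raw_value) -> dict:
    """Coerce a raw reading to the declared type and attach name and unit."""
    return {
        "name": definition.name,
        "value": definition.data_type(raw_value),
        "unit": definition.unit,
    }


# A raw string reading from the device becomes a typed, labeled value.
reading = to_model_value(OVEN_21_TEMPERATURE, "350")
```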

What is Metadata and Why is it Important?

Metadata (sometimes known as properties) is static, descriptive information that provides a more complete understanding of a data element. Data elements in most production control systems are, by themselves, completely undecipherable (Register 40202) or mostly undecipherable (Tag Speed44). Without metadata, these data elements are often useless unless knowledge is communicated implicitly or via an external mechanism.

There are multiple types of metadata. Metadata can be:

- Descriptive, such as “Section 2 Pressure”.

- Structural, such as data type, max/min and array dimension.

- Administrative, such as last read time, display name and facility name.

Since metadata is static, it can be created when the data model is created or when the data model is instantiated with tags from the production control system. Any metadata known and available when the data model is created should be associated with it. Any other metadata known by the control system personnel should be assigned to the data model when it is instantiated.
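The two-stage assignment of metadata might look like the following sketch. The field names and values are assumptions for illustration (pairing “Section 2 Pressure” with Register 40202 and psi is invented for the example), and the rule that instantiation may not redefine creation-time metadata is one possible policy, not something the approach requires.

```python
# Metadata known when the model is authored (hypothetical field names).
creation_metadata = {
    "description": "Section 2 Pressure",  # descriptive
    "data_type": "float",                 # structural
    "min": 0.0,                           # structural
    "max": 150.0,                         # structural
    "unit": "psi",
}

# Metadata supplied by control system personnel at instantiation.
instantiation_metadata = {
    "source": "Register 40202",
    "facility_name": "Plant A",           # administrative
    "display_name": "Sec 2 Press",        # administrative
}


def instantiate(creation: dict, instantiation: dict) -> dict:
    """Combine both metadata sets, refusing to let instantiation
    silently override anything fixed at creation time."""
    clashes = creation.keys() & instantiation.keys()
    if clashes:
        raise ValueError(f"instantiation redefines: {sorted(clashes)}")
    return {**creation, **instantiation}


element_metadata = instantiate(creation_metadata, instantiation_metadata)
```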

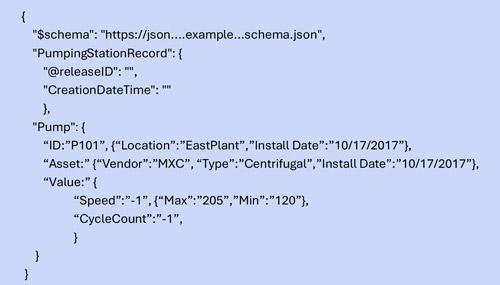

Figure 1 – Partial List of Pump Data Model with Static Data

How are Data Models Organized?

Data models are simple hierarchical lists of data elements and static metadata. Figure 1 illustrates a portion of a data model for pumps written in JSON notation. The pump section includes identity, asset and value sections that are each further described with metadata that is known at the time of data model creation.

While JSON is one of the better ways to organize manufacturing data models, models are commonly implemented using many different mechanisms:

- In programmable controllers, models are organized using User-Defined Types (UDTs).

- In databases, models are organized using schemas that define the contents of each table.

- In an Excel spreadsheet, the columns are organized such that successive columns indicate hierarchy.

- In text files, models are typically formed using JavaScript Object Notation (JSON).
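For readers who want to see the JSON form in use, here is a small sketch that loads a hierarchical model and lists its elements. The JSON content is invented for illustration and is not a transcription of Figure 1; only the top-level `identity`, `asset` and `value` sections follow the description above, and the helper `element_paths` is a hypothetical name.

```python
import json

# Illustrative model only; field names inside each section are assumptions.
PUMP_MODEL_JSON = """
{
  "pump": {
    "identity": {"model_name": "Pump", "version": "1.0.0"},
    "asset": {"asset_id": {"data_type": "string"},
              "location": {"data_type": "string"}},
    "value": {"run_time": {"data_type": "float", "unit": "h"},
              "cycle_count": {"data_type": "int", "unit": "cycles"}}
  }
}
"""


def element_paths(node: dict, prefix: str = ""):
    """Yield the dotted path of every node whose children are plain
    metadata values rather than further nested sections."""
    for key, child in node.items():
        path = f"{prefix}.{key}" if prefix else key
        has_nested = isinstance(child, dict) and any(
            isinstance(v, dict) for v in child.values()
        )
        if has_nested:
            yield from element_paths(child, path)
        else:
            yield path


model = json.loads(PUMP_MODEL_JSON)
paths = list(element_paths(model))
```

Dotted paths such as `pump.value.run_time` give every element a stable address that a database column, a UDT member or a spreadsheet row can map to.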

Where Should Data Models and Metadata Be Stored in a Manufacturing System?

Data models must be located where every application consumer can access them. There are no data governance rules specifying how the models should be organized, but the file containing these master data models must be available to every application that wants to use them.

Keeping data models in a single place enhances the data architect’s ability to maintain them and provide versioning when the models are modified.
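A single, versioned home for models can be approximated with a very small registry. The sketch below is an in-memory stand-in, assuming dotted numeric version strings; the `ModelRegistry` class and its method names are hypothetical, and a production store would sit behind a database or file service with access control.

```python
class ModelRegistry:
    """In-memory stand-in for a central, versioned model store."""

    def __init__(self):
        self._models = {}  # (name, version) -> model definition

    def publish(self, name: str, version: str, definition: dict) -> None:
        key = (name, version)
        if key in self._models:
            # Published versions are immutable; changes need a new version.
            raise ValueError(f"{name} {version} already published")
        self._models[key] = definition

    def get(self, name: str, version: str) -> dict:
        return self._models[(name, version)]

    def latest(self, name: str):
        versions = [v for (n, v) in self._models if n == name]
        if not versions:
            raise KeyError(name)
        # Compare versions numerically so "1.10.0" sorts after "1.9.0".
        newest = max(versions, key=lambda v: tuple(int(p) for p in v.split(".")))
        return newest, self._models[(name, newest)]


registry = ModelRegistry()
registry.publish("Pump", "1.9.0", {"elements": ["run_time"]})
registry.publish("Pump", "1.10.0", {"elements": ["run_time", "cycle_count"]})
latest_version, latest_model = registry.latest("Pump")
```

Refusing to overwrite a published version is a deliberate choice here: consumers that pinned an older version keep getting exactly what they validated against.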

Ten Recommendations for Using Data Models in a Manufacturing System

- Create Company-wide Standard Models Appropriate to Your Manufacturing Operation.

Use standard models tailored to your industry and specific manufacturing operation. Make them widely available throughout your organization to promote interoperability throughout the plant and the enterprise. Storing models within applications or failing to use standard models results in reduced efficiency and chaos throughout an organization.

- Use Standard Protocols and Open APIs.

Standards are the key to reducing ambiguity, increasing maintainability and eliminating the chaos of bespoke systems. ISA-95, ISA-88, OpenAPI, ISA-101, PackML and other standards create common languages and models that connect different factory floor systems, reducing the chaos caused by non-standard information transfer.

- Define Data Models That Focus on the Priorities of Your Manufacturing Operations.

Data models have one ultimate application: to enhance the operation of your manufacturing production systems. Start with the end in mind by defining the problems to be solved, identifying the data elements required to solve them and building those data elements into data models.

- Define Clear Ownership and Governance.

The meaning of your data must be clear and consistent throughout the organization. Using different data models is like everyone using a different OEE formula. It’s chaos. Designate a team or individual to manage data models, including change control, approval workflow and lifecycle management.

- Secure Your Data Models

Because data models are central to much of your manufacturing operation, write-access to the data models must be strictly regulated, while read-access should be widely available.

- Don’t Consider Data Models as Fixed

Data models, like manufacturing operations, are not fixed. New models will be added, old models revised and models that are no longer applicable will be removed. Add identity, description and versioning metadata to all your data models.

Figure 2 – It is valuable to expose Time Series data at Levels 1 and 2.

- Model Both Transactional and Time-Series Interfaces

While traditional systems only model transactional interfaces at L3 and L4 of the architecture (Figure 2), there is also value in exposing the data models for time-series data collection at L1 and L2. Many of the same recommendations apply to both time-series and transactional data models, but you can choose to use different master data models for each. You should include models for all PLC UDTs in the L1 and L2 model. AI systems must be able to recognize time-series data generated by these UDTs.

- Provide Standard Ways to Access Your Data Models

Integration is simpler when standard APIs are available to access data models. Consistent, standard APIs are difficult to achieve without tooling, so use tools such as Swagger (for OpenAPI) to document synchronous APIs and enable standard access to the underlying data. AsyncAPI plays the same role for documenting asynchronous APIs in Event-Driven Architectures (EDA).

- Create Metadata When the Data Model is Created and When it is Instantiated

Any metadata known when the data model is created should be incorporated into it. At instantiation, a standard set of metadata derived from the control systems team’s knowledge should be included with the model. Metadata such as deadband, enabled, time value quality and unit of measure is immensely important to AI data models.

- Enforce Enterprise-Wide Naming, Units, Time Conventions and Semantic Standards Across all Models.

Inconsistent standards and semantics undermine interoperability and lead to silent failures and hallucinations. A tag named Oven21Temp in one system and Oven_21_Temperature in another breaks AI models.
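Naming rules are among the easiest of these recommendations to check automatically. The sketch below assumes a hypothetical convention of `<Asset>_<Number>_<Measurement>`; the pattern and the function name `nonconforming` are illustrative choices, and an organization would substitute its own rule.

```python
import re

# Hypothetical convention: <Asset>_<Number>_<Measurement>,
# for example Oven_21_Temperature.
NAME_PATTERN = re.compile(r"[A-Z][a-z]+_\d+_[A-Z][A-Za-z]+")


def nonconforming(names):
    """Return the names that do not follow the assumed convention."""
    return [n for n in names if not NAME_PATTERN.fullmatch(n)]


bad_names = nonconforming(["Oven_21_Temperature", "Oven21Temp", "Pump_3_RunTime"])
```

Running a check like this when a model is published, rather than when an AI system consumes the data, surfaces the inconsistency while it is still cheap to fix.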

FAQs

Why is data modeling important?

Data modeling is vital for organizations that wish to be data-driven. You cannot be data-driven unless you have thought through what data you need, how to model that data, how to organize and maintain those models and how various applications will consume and use that data.

What is the advantage of organized collections of data models (master data model concept) vs. data models that are embedded in a software application?

Master Data Models (MDM) are maintained in a single place (database, SharePoint file, Excel spreadsheet, etc.), separate from applications, to ensure consistency across all the related applications that use them. MDM-based models are updated and versioned in one place only, and new versions are immediately available to all the applications that use them.

What is the difference between a schema and a data model?

A schema is the formal definition of the data model. It is important that applications receiving a model validate that it is properly formatted. A consumer can match the model name and version to the original schema to perform that validation.
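Matching on model name and version could be sketched as follows. This is a simplified, hand-rolled check under assumed payload keys (`model`, `version`, `values`); the `SCHEMAS` table and `validate` function are hypothetical, and a dedicated schema language would normally replace the type table.

```python
# Schemas keyed by (model name, version); contents are illustrative.
SCHEMAS = {
    ("Pump", "1.0.0"): {"asset_id": str, "run_time": float, "cycle_count": int},
}


def validate(payload: dict) -> list:
    """Return a list of problems; an empty list means the payload conforms."""
    key = (payload.get("model"), payload.get("version"))
    schema = SCHEMAS.get(key)
    if schema is None:
        return [f"unknown model/version: {key}"]
    problems = []
    values = payload.get("values", {})
    for field_name, expected_type in schema.items():
        if field_name not in values:
            problems.append(f"missing field: {field_name}")
        elif not isinstance(values[field_name], expected_type):
            problems.append(f"wrong type for {field_name}")
    for extra in sorted(values.keys() - schema.keys()):
        problems.append(f"unexpected field: {extra}")
    return problems


good = {"model": "Pump", "version": "1.0.0",
        "values": {"asset_id": "PUMP-001", "run_time": 12.5, "cycle_count": 40}}
bad = {"model": "Pump", "version": "1.0.0",
       "values": {"asset_id": "PUMP-001", "run_time": "12.5"}}
```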

How is data modeling related to the concept of a Unified Namespace (UNS)?

A UNS is a data exchange mechanism for manufacturing data. To be effective, the UNS must exchange instantiated data models.

How do master data models relate to PLC User-Defined Types (UDTs)?

Master data models are logical definitions of structure and meaning. PLC UDTs are physical implementations of those models inside control systems. The UDT should be built to conform to the master data model, not define it. If PLC structures become the de facto master, enterprise scalability breaks. Keeping the logical model separate from its PLC implementation reinforces architectural separation and governance.
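One way to keep the UDT subordinate to the master model is to compare the two whenever either changes. The sketch below assumes both have already been exported as simple name-to-type mappings; the function name `conformance_gaps`, the member names and the export step itself are hypothetical.

```python
def conformance_gaps(master_elements: dict, udt_members: dict) -> dict:
    """Compare a UDT's members to the master model's elements.

    Both arguments map member name -> type name.
    """
    shared = master_elements.keys() & udt_members.keys()
    return {
        "missing": sorted(master_elements.keys() - udt_members.keys()),
        "extra": sorted(udt_members.keys() - master_elements.keys()),
        "mismatched": sorted(
            name for name in shared if master_elements[name] != udt_members[name]
        ),
    }


# Illustrative exports; DINT and REAL are used as example PLC type names.
master = {"CycleCount": "DINT", "RunTime": "REAL", "EnergyUsage": "REAL"}
udt = {"CycleCount": "DINT", "RunTime": "DINT", "LocalDebugFlag": "BOOL"}
gaps = conformance_gaps(master, udt)
```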

What happens when different plants use different naming conventions or units?

Without semantic consistency, enterprise AI models require custom mapping per site. That destroys scalability, increases maintenance costs and inhibits plant-to-plant operational comparisons. A centralized master data model enforces naming conventions, unit standards, time conventions and asset hierarchy rules.

Company-wide standards matter.
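When every element declares its unit, one shared conversion table can replace site-by-site guesswork. The following sketch assumes degrees Celsius as the enterprise standard for temperature and uses assumed unit labels (`degC`, `degF`, `K`); both choices are illustrative.

```python
# Converters from a declared unit to the assumed enterprise standard (degC).
TO_CELSIUS = {
    "degC": lambda v: v,
    "degF": lambda v: (v - 32.0) * 5.0 / 9.0,
    "K": lambda v: v - 273.15,
}


def normalize_temperature(value: float, unit: str) -> float:
    """Convert a temperature to degC using the unit declared in the model."""
    try:
        return TO_CELSIUS[unit](value)
    except KeyError:
        # An undeclared or unknown unit is an error, never a silent guess.
        raise ValueError(f"no conversion defined for unit {unit!r}") from None


plant_a = normalize_temperature(212.0, "degF")   # site reporting Fahrenheit
plant_b = normalize_temperature(100.0, "degC")   # site reporting Celsius
```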

How do data models support AI training and model accuracy?

AI models require structured, labeled, contextualized inputs. Data Models provide:

- Defined units

- Known ranges

- Quality indicators

- Asset context

- Version traceability

Without structured models, AI systems must infer context. Inference introduces error, drift and unreliable predictions.
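The items listed above can be put to work before a sample ever reaches a model. The sketch below screens one sample using its known range and a quality indicator; the `screen_sample` function, the quality label `good` and the range values are assumptions for illustration.

```python
def screen_sample(value: float, meta: dict, quality: str):
    """Return (usable, reason) for one sample before it reaches a model."""
    if quality != "good":
        return False, f"quality is {quality}"
    if not (meta["min"] <= value <= meta["max"]):
        return False, "outside known range"
    return True, "ok"


# Hypothetical metadata for an oven temperature element.
oven_meta = {"unit": "degC", "min": 0.0, "max": 400.0}

accepted = screen_sample(350.0, oven_meta, "good")
rejected_range = screen_sample(900.0, oven_meta, "good")
rejected_quality = screen_sample(350.0, oven_meta, "bad")
```

Because the limits come from the model's metadata rather than from the training code, the same screening applies to every consumer of the data product.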

Conclusion

AI does not create manufacturing insight. It consumes structured information and returns analysis. If the underlying data is inconsistent, undocumented or implicitly defined, the results will be unreliable regardless of how advanced the analytics appear to be.

The foundation of Smart Manufacturing in 2026 is not the AI model. It is the standardized manufacturing data model that defines meaning, context, structure and access.

Manufacturers that build company-wide master data models, apply consistent metadata, expose models through standard APIs and secure them within a disciplined architecture create systems that are maintainable, extensible and AI-ready.

Those that rely on implicit definitions, scattered schemas and application-embedded models create brittle integrations that do not scale.

A brief implementation checklist to follow:

- Start with the decisions the business must make.

- Define structured, standardized data models to support those decisions.

- Attach complete metadata at creation and instantiation.

- Store models centrally with version control.

- Expose them through secure, IT-friendly interfaces.

- Integrate models directly with their instantiated data products.

When manufacturing data is intentionally modeled and architected properly, AI systems can finally operate with clarity rather than guesswork. Insight does not begin with algorithms. It begins with disciplined data modeling.

Resources

Video: Master Data Modeling for Level 2
