Technology · May 5, 2026

Legacy CIP Networks in the Ethernet Era

Reducing downtime risk in hybrid OT environments where legacy Common Industrial Protocol (CIP) networks still carry production.

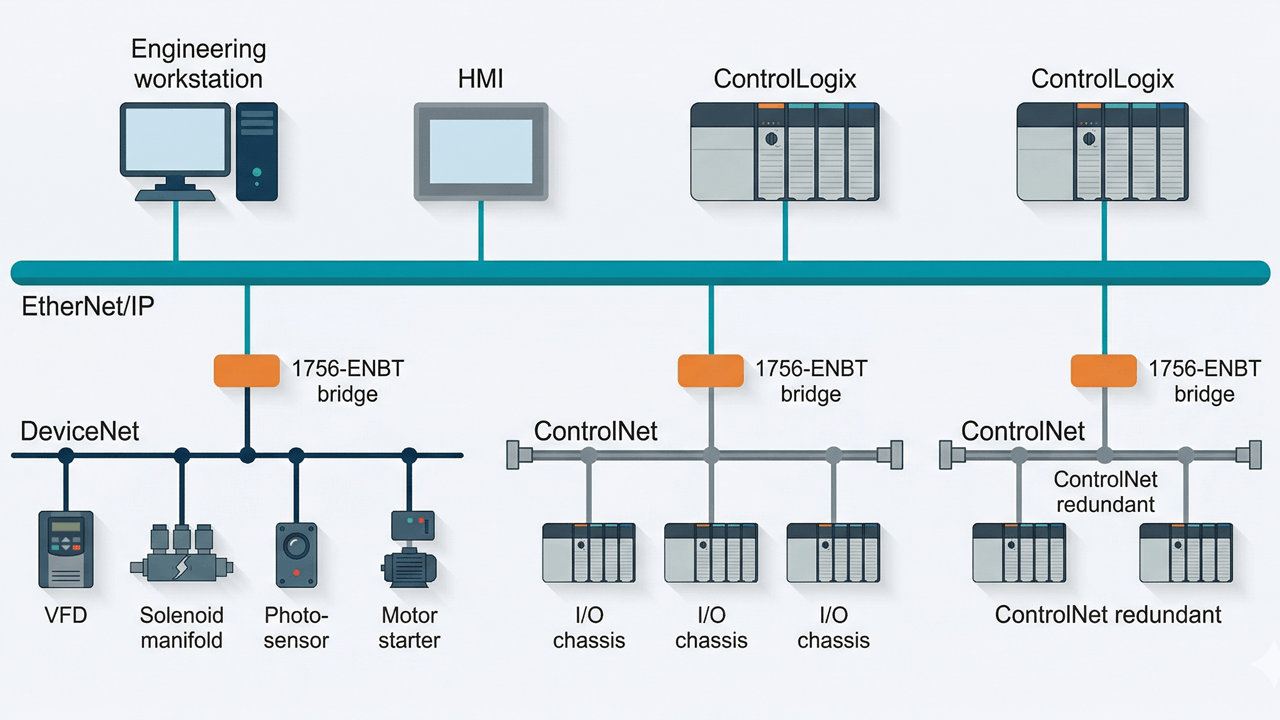

Typical hybrid OT architecture (above). A modern EtherNet/IP backbone coexists with DeviceNet and ControlNet segments that remain mission-critical in installed plants.

DeviceNet and ControlNet still anchor thousands of production lines worldwide. Before migration becomes unavoidable, maintenance teams can materially reduce unplanned downtime through disciplined baselining, physical-layer verification, a tight spares policy, and rigorous change control.

The Problem in Plain Terms

Walk into a mature discrete-manufacturing plant almost anywhere in the world and you will find the same picture: a modern EtherNet/IP or PROFINET backbone carrying supervisory traffic, and beneath it, quietly running the actual production, a patchwork of DeviceNet and ControlNet segments that have been in service for fifteen years or more. These legacy Common Industrial Protocol (CIP) networks were never retired. They were wrapped.

The reasons are well understood. Full protocol migration means re-engineering I/O topologies, revalidating safety interlocks, retraining maintenance crews, and accepting a production outage that most plant managers cannot justify while the existing network is, technically, still running. The result is a hybrid architecture that works until the day it does not.

The failure modes are not dramatic. They are slow, intermittent, and expensive: a scanner that drops a node once per shift; a ControlNet segment whose Network Update Time (NUT) margin has eroded below recommended thresholds; a DeviceNet trunk where the 24 V power budget has been stretched across one too many drop lines. None of these will trigger a protective stop on a good day. On a bad day they compound.

Why Legacy CIP Persists

Three factors keep DeviceNet and ControlNet alive long past what the original roadmap predicted. First, asset longevity: drives, valve banks, weigh modules and motor starters specified in the mid-2000s still meet their process duty. Second, the integration cost of mixed-vintage PLCs — a working program in a ControlLogix or SLC platform is rarely refactored without a compelling reason. Third, and most often underestimated, institutional memory: the electricians and technicians who commissioned the network know where the terminators are, which drop is flaky in summer, and which node number was skipped. That knowledge is not in the documentation.

“Legacy CIP segments rarely fail outright. They degrade — and the degradation shows up as unexplained downtime long before it shows up on a scanner log.”

The Failure Pattern

Field data from audits across food-and-beverage, automotive, pulp-and-paper and mining sites shows a consistent pattern. The symptoms that precede a legacy-CIP production stop are almost always physical-layer issues that a protocol-layer tool cannot see. Specifically: shield continuity broken at a panel re-termination; trunk length extended during a line modification without recomputing the length and power budgets; terminators of the wrong impedance fitted during a maintenance window; voltage drop along the trunk exceeding the power budget for the active device count.

These issues do not generate hard faults. They generate CRC counters that tick upward, retries that lengthen scan times, and occasional node disappearances that are logged and forgotten. Classic correlation: in every audited plant where legacy CIP was a top-three downtime contributor, the maintenance team had no baseline reference for what “healthy” looked like on that network.
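One way to make that slow drift visible is to compare how fast an error counter climbs today against how fast it climbed when the baseline was taken. The sketch below is a minimal illustration under stated assumptions: the function names are hypothetical, the samples are assumed to come from whatever diagnostic tool the site already uses, and the factor of 3 is an arbitrary illustrative threshold, not a recommended value.

```python
from typing import Sequence, Tuple


def counter_rate_per_hour(samples: Sequence[Tuple[float, int]]) -> float:
    """Average increase per hour of a cumulative error counter.

    `samples` holds (time_in_hours, counter_value) pairs, oldest first.
    Assumes the counter did not reset or wrap between the first and
    last sample.
    """
    if len(samples) < 2:
        raise ValueError("need at least two samples")
    (t0, c0), (t1, c1) = samples[0], samples[-1]
    if t1 <= t0:
        raise ValueError("timestamps must increase")
    return (c1 - c0) / (t1 - t0)


def is_degrading(baseline_rate: float, current_rate: float,
                 factor: float = 3.0) -> bool:
    """Flag a segment whose error rate exceeds the baseline rate by `factor`.

    The default factor is an assumption chosen for illustration only.
    """
    return current_rate > baseline_rate * factor


# Example: CRC counter sampled at the start and end of an eight-hour shift.
baseline_rate = counter_rate_per_hour([(0.0, 100), (8.0, 104)])
current_rate = counter_rate_per_hour([(0.0, 500), (8.0, 520)])
print(is_degrading(baseline_rate, current_rate))
```

The comparison only means something if a baseline rate was recorded while the network was healthy, which is the point of the next section.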

Establish a Baseline Before Anything Else

The single most useful action a maintenance team can take on a legacy CIP network is not migration, not redundancy, not a managed-switch refresh. It is to measure the network once, carefully, while it is running well, and keep the record.

A useful baseline captures, for each segment: node inventory with firmware revisions; physical topology including trunk length, drop lengths, terminator locations and power-tap positions; network health metrics (NUT margin on ControlNet, signal margin and error counters on DeviceNet); and a traffic profile at steady state. None of this requires exotic instrumentation. It requires discipline and a Saturday.
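The baseline does not need a special tool to store it. As a rough sketch, the items listed above could be held in a small structured record and saved as JSON alongside the plant documentation. Every class and field name below is hypothetical and chosen for illustration; a site would adapt them to its own naming.

```python
import json
from dataclasses import asdict, dataclass, field
from typing import Dict, List


@dataclass
class Node:
    address: int
    device: str
    firmware: str


@dataclass
class SegmentBaseline:
    segment: str                 # site-specific segment name
    protocol: str                # "DeviceNet" or "ControlNet"
    captured_on: str             # ISO date of the measurement
    trunk_length_m: float
    drop_lengths_m: Dict[str, float] = field(default_factory=dict)
    terminator_locations: List[str] = field(default_factory=list)
    power_tap_positions_m: List[float] = field(default_factory=list)
    nodes: List[Node] = field(default_factory=list)
    metrics: Dict[str, float] = field(default_factory=dict)  # counters, margins

    def to_json(self) -> str:
        """Serialize the record so it can be filed and compared later."""
        return json.dumps(asdict(self), indent=2, sort_keys=True)


baseline = SegmentBaseline(
    segment="Line 3 DeviceNet",
    protocol="DeviceNet",
    captured_on="2026-05-02",
    trunk_length_m=85.0,
    drop_lengths_m={"N05": 2.5},
    terminator_locations=["Panel A", "Panel D"],
    power_tap_positions_m=[0.0],
    nodes=[Node(address=5, device="valve bank", firmware="2.3")],
    metrics={"crc_errors_per_hour": 0.5},
)
print(baseline.to_json())
```

A plain-text format is a deliberate choice here: it can be read years later without the software that produced it.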

The value is asymmetric. When the line stops at 02:40 on a Tuesday and the question is “did something change?”, a baseline answers it in minutes. Without the baseline, the team is troubleshooting a moving target under production pressure.
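Answering "did something change?" can be as simple as comparing the recorded node inventory with what the scanner reports now. The sketch below assumes both snapshots are available as mappings from node address to firmware revision; the function name and the data are hypothetical.

```python
from typing import Dict, List


def diff_snapshots(baseline: Dict[int, str],
                   current: Dict[int, str]) -> Dict[str, List[int]]:
    """Compare two {node_address: firmware_revision} mappings.

    Returns the addresses that disappeared, appeared, or changed
    firmware revision since the baseline was taken.
    """
    base_addrs, cur_addrs = set(baseline), set(current)
    return {
        "missing": sorted(base_addrs - cur_addrs),
        "added": sorted(cur_addrs - base_addrs),
        "firmware_changed": sorted(
            a for a in base_addrs & cur_addrs if baseline[a] != current[a]
        ),
    }


recorded = {1: "2.3", 5: "1.0", 12: "4.1"}
observed = {1: "2.3", 5: "1.1", 20: "3.0"}
print(diff_snapshots(recorded, observed))
```

The same comparison extends naturally to drop lengths, terminator part numbers, or any other field kept in the baseline record.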

Physical Layer Discipline

Legacy CIP media is forgiving up to a point, then it is not. Four practices separate sites that run for a decade on the same infrastructure from sites that do not. Treat the physical layer as a controlled asset: every re-termination, every added drop, every power-supply swap is a change that must be recorded against the baseline. Verify terminators by part number, not by presence. Keep trunk and drop lengths inside specification even when temporary cabling is pulled for a trial run; temporary becomes permanent faster than anyone expects. And budget power explicitly: DeviceNet in particular tolerates marginal current for a long time before it punishes you.
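Budgeting power and length explicitly comes down to arithmetic that is easy to script. The sketch below estimates the voltage drop at the far end of a trunk fed from one end and checks trunk and drop lengths against per-baud-rate limits. The cable resistance, the allowed drop and the length limits are commonly cited planning figures for thick DeviceNet cable, included here as assumptions; confirm them against the ODVA planning guide and the cable datasheet before relying on them. Connector and tap resistance are ignored, and all names are hypothetical.

```python
from typing import List, Sequence, Tuple

# Assumed planning values; verify against the ODVA guide and cable datasheet.
THICK_OHM_PER_M = 0.015       # resistance of one power conductor, thick cable
MAX_CONDUCTOR_DROP_V = 4.65   # allowed drop on one power conductor
MAX_SINGLE_DROP_M = 6.0       # maximum length of any single drop line
# baud rate (kbit/s) -> (max thick trunk length m, max cumulative drop length m)
LENGTH_LIMITS = {125: (500.0, 156.0), 250: (250.0, 78.0), 500: (100.0, 39.0)}


def worst_case_drop_v(loads: Sequence[Tuple[float, float]],
                      ohm_per_m: float = THICK_OHM_PER_M) -> float:
    """Voltage drop at the farthest node of a trunk fed from one end.

    `loads` holds (distance_from_supply_m, current_a) pairs. Each node's
    current flows through the cable between the supply and that node, so
    the drop is resistance_per_metre * sum(distance * current).
    """
    return ohm_per_m * sum(d * i for d, i in loads)


def length_violations(baud_kbps: int, trunk_m: float,
                      drops_m: Sequence[float]) -> List[str]:
    """List which length rules a segment breaks at the given baud rate."""
    max_trunk, max_cumulative = LENGTH_LIMITS[baud_kbps]
    problems = []
    if trunk_m > max_trunk:
        problems.append("trunk too long")
    if any(d > MAX_SINGLE_DROP_M for d in drops_m):
        problems.append("single drop too long")
    if sum(drops_m) > max_cumulative:
        problems.append("cumulative drop length too long")
    return problems


drop = worst_case_drop_v([(50.0, 0.5), (100.0, 1.0), (200.0, 0.25)])
print(round(drop, 3), drop <= MAX_CONDUCTOR_DROP_V)
print(length_violations(500, 120.0, [3.0, 7.5]))
```

Re-running the calculation whenever a node or drop is added is what keeps "temporary" cabling from silently consuming the margin.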

Technician testing DeviceNet trunk terminators and signal margin during a scheduled maintenance window, control cabinet open, handheld network analyser in view.

Spares Strategy and Change Control

Spares for legacy CIP are no longer stocked by most distributors. Plants that operate DeviceNet scanners, ControlNet modules and 1756-series bridges should maintain a spares policy that acknowledges this: identify the critical modules per line, hold tested spares on site, and verify annually that the spare works — not just that it is in the cabinet.
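The annual verification is easy to lose track of, so it helps to keep the last test date with each spare and list what is overdue. The following is a minimal sketch with a hypothetical record layout; the 365-day interval simply mirrors the annual check described above.

```python
from datetime import date
from typing import Dict, List, Optional, Sequence


def overdue_spares(spares: Sequence[Dict[str, Optional[object]]],
                   today: date, max_age_days: int = 365) -> List[str]:
    """Return the spares that were never tested or were tested too long ago.

    Each entry is a dict with a 'part' label and a 'last_tested' date
    (or None if the spare has never been powered up and verified).
    """
    overdue = []
    for spare in spares:
        tested = spare["last_tested"]
        if tested is None or (today - tested).days > max_age_days:
            overdue.append(spare["part"])
    return overdue


inventory = [
    {"part": "DeviceNet scanner, line 3", "last_tested": date(2025, 11, 1)},
    {"part": "ControlNet bridge, line 1", "last_tested": date(2024, 12, 1)},
    {"part": "DeviceNet power tap", "last_tested": None},
]
print(overdue_spares(inventory, today=date(2026, 5, 5)))
```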

Change control is the partner discipline. Many intermittent faults traced to legacy CIP originate in a change that was made correctly but was not documented: a drop rerouted around a new conveyor, a node address reused after a scrapped machine, a power tap added without adjusting the supply. A change log that ties every physical modification to the baseline turns a four-hour investigation into a five-minute lookup.
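The five-minute lookup depends on the change log being queryable by segment and date. As a sketch, assuming each entry records a date, a segment name and a short description (a hypothetical layout, not a prescribed format), the lookup could be as small as this:

```python
from datetime import date
from typing import Dict, List, Sequence


def changes_since(log: Sequence[Dict[str, object]], segment: str,
                  since: date) -> List[Dict[str, object]]:
    """Return changes to `segment` made on or after `since`, oldest first."""
    matching = [
        entry for entry in log
        if entry["segment"] == segment and entry["date"] >= since
    ]
    return sorted(matching, key=lambda entry: entry["date"])


change_log = [
    {"date": date(2025, 3, 1), "segment": "L3-DNet", "change": "drop rerouted"},
    {"date": date(2026, 1, 15), "segment": "L3-DNet", "change": "power tap added"},
    {"date": date(2026, 2, 1), "segment": "L4-CNet", "change": "terminator replaced"},
    {"date": date(2025, 11, 20), "segment": "L3-DNet", "change": "node 14 address reused"},
]

# Everything that touched the segment since its baseline was captured.
for entry in changes_since(change_log, "L3-DNet", since=date(2025, 6, 1)):
    print(entry["date"], entry["change"])
```

Using the baseline capture date as the `since` argument is what ties the log to the baseline.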

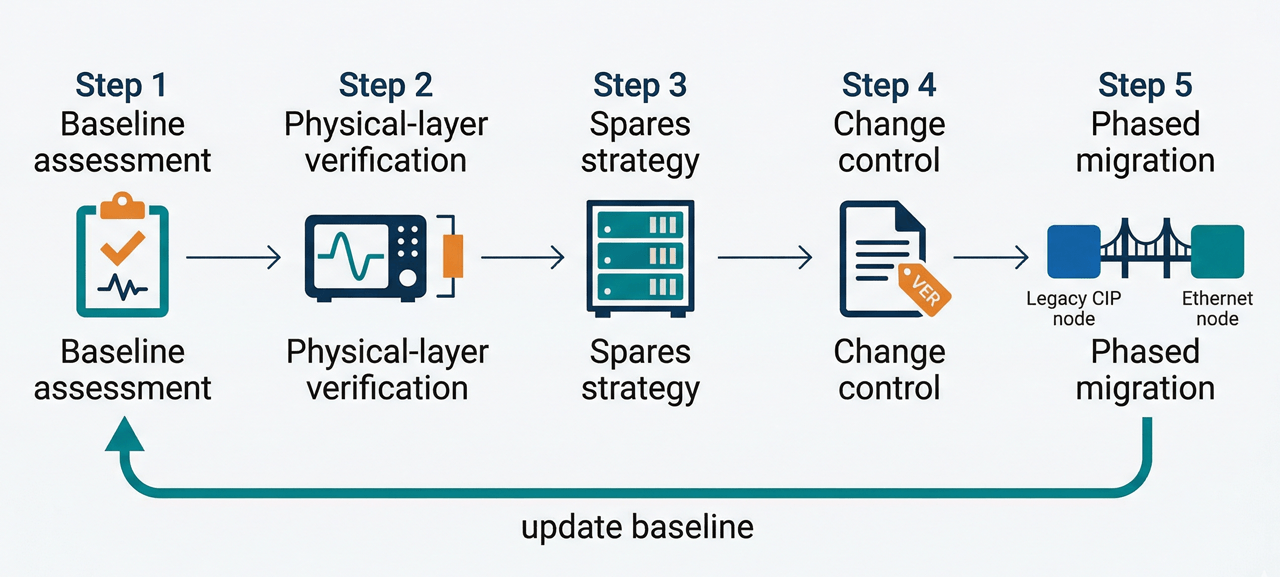

Phased Migration, Not a Big-Bang Project

Migration from DeviceNet or ControlNet to EtherNet/IP is eventually unavoidable, but it does not have to be a single project with a single shutdown. The pragmatic path is segment-by-segment: identify the legacy segment with the highest downtime contribution, plan its migration into the next scheduled maintenance window, validate in parallel where possible using bridges or gateways, and cut over when the new segment has demonstrated equivalent or better signal quality against a fresh baseline.
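The segment-by-segment selection and the cutover check can both be written down as simple rules. The sketch below ranks segments by recorded unplanned downtime and treats a new segment as ready when its error rate is no worse than the legacy figure. The names, the data, and the use of a single error rate as a stand-in for "signal quality" are all simplifying assumptions for illustration.

```python
from typing import Dict, List


def rank_segments(downtime_minutes: Dict[str, float]) -> List[str]:
    """Order segments by unplanned downtime, worst first.

    Ties are broken alphabetically so the ranking is repeatable.
    """
    return [
        name for name, _ in sorted(
            downtime_minutes.items(), key=lambda item: (-item[1], item[0])
        )
    ]


def ready_to_cut_over(legacy_error_rate: float, new_error_rate: float) -> bool:
    """True when the new segment performs at least as well as the legacy one."""
    return new_error_rate <= legacy_error_rate


last_year = {"L3-DNet": 340.0, "L1-CNet": 120.0, "L4-DNet": 340.0}
print(rank_segments(last_year))
print(ready_to_cut_over(legacy_error_rate=2.0, new_error_rate=1.5))
```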

This approach respects two realities. Production will not stop for the convenience of the network plan. And migration budgets are almost always approved in increments, not in lump sums. A documented, measured, segment-level plan survives budget cycles that a big-bang proposal does not.

Figure 3 — A practical framework for legacy-CIP reliability.

Sequential workflow: baseline assessment → physical-layer verification → spares policy → change control → phased migration. Each block feeds into the next and loops back to the baseline record.

What to Expect

Plants that apply these four disciplines — baseline, physical-layer control, spares and change management, phased migration — consistently report a reduction in legacy-network unplanned downtime in the range of 30 to 60 percent within the first year, without capital expenditure on replacement hardware. The gain is operational, not technological. The equipment is already in place; what changes is the rigor with which it is managed.

Legacy CIP is not the enemy. Unmanaged legacy CIP is. For every plant that will eventually migrate to an all-Ethernet topology, there are several more where the installed base will carry production for another decade. The work of the next five years is to make that decade predictable.